#90 How Microsoft's AI Innovation Officer Actually Uses AI | Dr. Michael J. Jabbour on Thinking, Not Just Tools

“Not because it’s helpful… it might be irresponsible for me not to.”

Listen to this episode on Apple Podcasts, Spotify, YouTube, or your favorite podcast platform.

Ignoring AI is not an option.

Do nothing, and AI will change your brain.

A study followed London taxi drivers using GPS. Their brains physically changed: parts actually shrank compared to drivers who didn't use GPS.

The good news: you can direct the process. You can use AI to be better. And do good.

I sat down with Michael Jabbour - my friend for 20 years. As the AI Innovation Officer at Microsoft, Michael has front-row seats inside Microsoft. What impresses me most is how he hasn't lost his soul.

Michael uses AI for 70% of his work. That didn't surprise me. The next thing he said gave me pause.

"Not because it's faster. Because it would be irresponsible not to."

I've been using AI a lot. And as much as I know, this conversation really got me thinking.

The wrong question is: "Should I use AI?"

The better question is: How do we protect our critical thinking when we're using the most powerful tool since fire?

EPISODE HIGHLIGHTS

In this conversation, Michael and I explore:

Why doing nothing means getting dumber (and how to direct the change)

Michael explains why AI isn't like the Industrial Revolution; it's like when humans discovered fire. Your brain is physically changing whether you use AI or not.

Three modes most people miss: assistant, partner, explorer

How to shift from using AI as a simple tool to using it as a thinking partner that challenges you and helps you explore new possibilities.

Why Michael uses multiple AI models (Copilot, Claude, ChatGPT) - and which prompts make AI teach instead of tell

The specific strategies Michael uses to ensure AI enhances his critical thinking rather than replacing it.

How to harness pervasive AI tech without letting it become invasive

Drawing the line between AI that empowers you and AI that diminishes your humanity.

TIMESTAMPS

00:00 - Intro: AI is changing your brain

00:31 - Meet Michael Jabbour: 20-year friendship, front-row seat at Microsoft

01:51 - The human connection behind the technology

02:40 - What doesn't show up on Michael's resume

03:30 - Microsoft's mission: Empowering every person on the planet

04:12 - Why AI isn't like the Industrial Revolution—it's like discovering fire

05:04 - London taxi drivers: How GPS physically changed their brains

07:37 - What's changing in work, creativity, and education

09:53 - Skills that become premium vs. what's being offloaded

10:06 - University dean's confession: "I created students who can't handle ambiguity"

12:16 - Competency vs. capability: The critical difference

14:30 - Using AI 70% of the time (responsibly, not efficiently)

18:45 - Three modes: Assistant, partner, explorer

23:12 - Which AI models Michael uses and why

28:30 - Prompts that make AI teach instead of tell

35:20 - Pervasive vs. invasive: Drawing the line

42:15 - AI isn't the enemy—losing our humanity is

48:30 - How to use AI for good

Subscribe to TranscendingX 🎧

ABOUT OUR GUEST

Dr. Michael J. Jabbour is the AI Innovation Officer in Microsoft's Office of the CTO, where he advances pioneering research in human-AI collaboration across education, medicine, engineering, and other critical fields. His current work spans biologically inspired AI models, memory, metacognition, agency, and the future of work—empowering individuals and organizations globally to harness the transformative potential of artificial intelligence. A recognized leader in large-scale organizational transformation, Dr. Jabbour brings decades of experience across artificial intelligence, human-centered and agile development, and healthcare. He previously served as CIO/CTO for several New York City agencies, including the NYC Department of Education, where he secured significant innovation funding and developed programs benefiting millions of users. A regular guest lecturer at top universities, he bridges the worlds of industry and academia, making complex technical concepts accessible and actionable.

CONNECT WITH MICHAEL J. JABBOUR

ABOUT OUR HOST

Uri Schneider, MA CCC-SLP is the founder of Schneider Speech - leading a boutique practice of specialists treating stuttering and communication disorders. He's also an executive coach focused on communication and leadership, helping individuals and organizations see the invisible patterns slowing them down.

With 25 years as a clinician, business leader, and former faculty at UC Riverside School of Medicine, Uri connects science, business, and real-life experience to show how better communication helps us think more clearly, decide faster, and take stronger action.

Uri hosts the TranscendingX podcast to reveal how everyday people transcend adversity - listening for what's not being said and making the invisible visible.

Uri lives with his wife and four kids. When he's not working, you can find him running outdoors.

Schneider Speech: schneiderspeech.com

Coaching with Uri Schneider: urischneider.com

Resources:

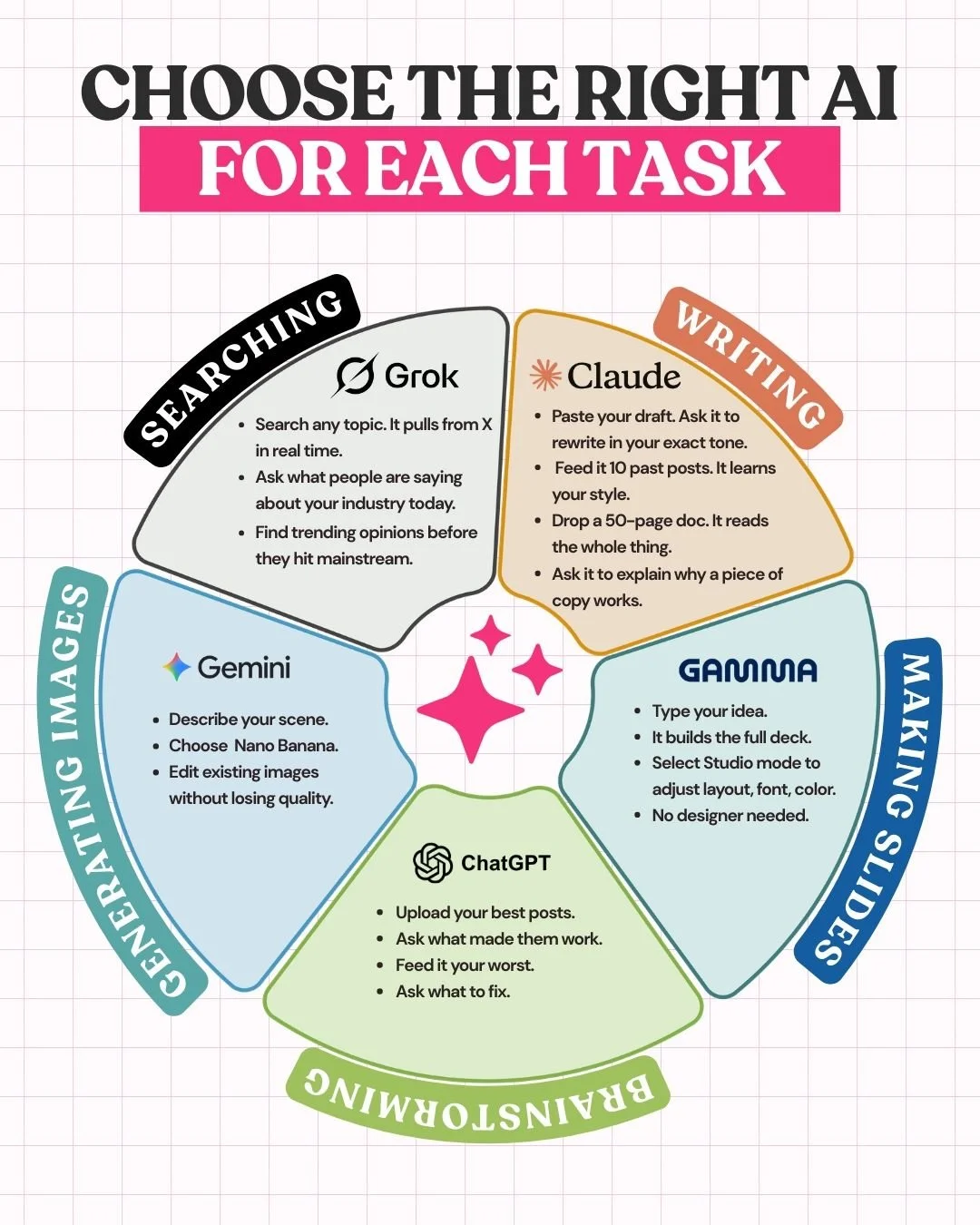

AI models from this episode with links to tutorials:

Microsoft Copilot - Video Tutorials

Claude - Anthropic Skilljar Tutorials

ChatGPT - Tutorial

Suno - How to make a song with Suno

Songscription - Turn your audio into sheet music

Learn How to AI - Substack from Ruben Hassid

Image by Ruben Hassid

FULL TRANSCRIPT

[00:00:00] Michael Jabbour: Not because it's helpful, because it might be irresponsible for me not to.

The Industrial Revolution, it's probably not the best metaphor. I would go back to something closer to when humans met fire, where we experienced biological change as a result of some external stimulus.

A famous example of cabbies in London, where they saw a shrinkage of certain parts of their brain physically when they were using GPS versus ones that were not using GPS.

[00:00:31] Uri Schneider: Michael Jabbour has been a friend of mine for more than 20 years, but I gotta say, when I met him 20 years ago, I had no idea what he would be doing today. He's a thought leader, he's an AI innovation officer at Microsoft in the Office of the CTO. We have a conversation that's not a technical conversation about AI.

But it brings the soul of humanity to the intersection with AI and the cusp of all the technology that's unfolding all around us. This conversation sheds light on creativity, work, education, and how all of us can use not just one model, but multiple models to make ourselves not only more productive, but more creative.

One of the things that comes outta this conversation is that he uses AI for about 70% of his work during the day, not because he's trying to get it done more efficiently, but because he feels it would be irresponsible not to use AI in the work that he does on a day-to-day basis. Listen to this conversation and see how you can use AI not just to be more productive, but to be more responsible and make a bigger impact in the world.

You should have brought your guitar.

[00:01:40] Michael Jabbour: I was thinking about it.

[00:01:42] Uri Schneider: That would've been awesome.

Alright, Michael. MJ,

[00:01:48] Michael Jabbour: Good to be with you.

Been a long, long time.

[00:01:51] Uri Schneider: This was on short notice. The opportunity struck and in, you know, true fashion, we show up and we make it happen. For the guy who's the AI innovation officer, this is just another example. Like, sure, I could be in SoHo on 24 hours' notice. No problem. But that's, that speaks to the human connection that, that we share.

Yeah, and that, I think, I wanna really highlight. I think at the beginning of this year, the beginning of 2025, everyone was terrified that AI was taking over. There was a feeling of being overwhelmed, of being swept up, of scarcity, of fear. And I think here we are at the end of 2025 and I think the vibe is very, very different.

So I hope we can get into that and talk about what's happening. But most importantly, what's something that doesn't show up on your resume that you'd want people to know about MJ?

[00:02:40] Michael Jabbour: I mean, I love humans of all, all ages. I am a, a person that just loves the experience of life, um, both its, uh, challenges as well as its benefits, and being able to lean into that wherever I can is a huge opportunity for me.

Um, so it doesn't matter whether it's AI or it's trying to figure out, um, how to repair some part of someone's body, um, or mind, but it's, it's important and it's something that has, uh, been a thread throughout my entire life.

[00:03:22] Uri Schneider: What's your job behind all these, uh, words? Innovation officer at the office of the CTO at Microsoft?

What's your role?

[00:03:30] Michael Jabbour: My, my job is the same job as anyone else at Microsoft, all several hundred thousand employees, which is, uh, to ensure that we are successful as humans, right? To empower every person and organization on the planet to achieve more. That's our mission. Um, and so making Microsoft successful, um, is really making the world successful.

Um, I love that about where I'm working now.

[00:03:52] Uri Schneider: So if we think about AI and the time that we're living in and kind of framing it in other revolutions that the world has gone through, the Industrial revolution and others. Can you give us a little bit of perspective, set the stage of kind of like what we're going through, what's already kind of come through the world, where we stand today, and kind of what to expect is coming next?

[00:04:12] Michael Jabbour: Sure. The, uh, the Industrial Revolution is probably not the best metaphor, I would say, for where we are right now. I would go back to something closer to when humans met fire, that kind of thing, where we experienced biological change as a result of some external stimulus. And that's very much where we are right now, where you're going to see changes.

So, um, at the very basic introduction of, uh, AI and technology to humans, you can go back to a very famous example of cabbies that they tested in London where they saw a shrinkage of certain parts of their brain physically when they were using GPS versus ones that were not using GPS.

[00:04:59] Uri Schneider: So the brain. And the neural networks in the brain actually changed as a result of using GPS technology.

[00:05:04] Michael Jabbour: Well, parts of the brain shrank. They atrophied, actually. So the neural networks is somewhat of a more complex, non-deterministic beast. But, um, but yes, physical change as a result of technology influence. Um, and we're in a place right now where that is scaling exponentially.

[00:05:27] Uri Schneider: I think when we were, when we met each other, we still remembered people's phone numbers.

[00:05:30] Michael Jabbour: Yes.

[00:05:30] Uri Schneider: That would be like another example.

[00:05:32] Michael Jabbour: Yes, that's right. Well, you remembered the string of digits. Yes. You still remember their names now, but now you dial by name instead of dial by number. That's right.

[00:05:40] Michael Jabbour: Right. So that transition was also interesting, but it was, uh, an easier one than the one that we're going through right now.

Right. During the industrial revolution, since you brought it up, um, you, you did see pretty big changes in how manufacturing was occurring, how precision was achieved, um, what it, what different types of labor could do different types of work. And a, a lot of our system today, uh, is heavily oriented around exactly what that was versus where we are today, right?

So even school is less like education, which prepares us for, um, ambiguity and situations that we can't prepare for, versus training, which is situations that we can prepare for. Right? So ēducāre, the Latin etymology for education, means to pull out, to extract a person's highest potential, versus pushing in.

And so the idea was to push in this knowledge, skills, and abilities to enable someone to reliably do something, um, in that line of achieving scale and precision at scale. Um, that's industrial revolution, right? Keep in mind it took 50 years for electricity to be widely distributed across the world.

Here you had ChatGPT going to hundreds of millions of users within months, and now you've got a capability, um, like diagnosis, for example, for a physician, that we've talked about, um, that might actually scale and become available to every person on the planet, uh, regardless of their location, um, their means, or even their technology access.

[00:07:12] Uri Schneider: So framing it when humans come in contact with fire, it actually changes the human biology.

[00:07:18] Michael Jabbour: Yep.

[00:07:19] Uri Schneider: Um, so what, where are we at? What's come into play in the past couple years? Where do we stand and what's next in this process? And then we can talk about in what ways it's affecting us as humans. I really enjoyed our conversation about how the way we think and the way we operate has to grow and shift.

[00:07:37] Michael Jabbour: Yeah. Uh, what changes occurred is still being studied. So I don't necessarily want to lean into, um, the current state of science, because it's evolving so fast right now. Um, and even our ability to detect what's changing is still, um, we're still entry level at that point. Um, but what's changing in terms of work has already, um, made pretty drastic moves.

So in where it comes down to, uh, let's just take a simple example of the phone. The iPhone, right? So the iPhone basically turned everyone into a photographer. It didn't say, okay, yes, you had to have a DSLR and you had to have X thousands of hours of training, and you had this much exposure to these types of subjects, and so now everyone is a photographer.

Right. Now when you think about something like making music, for example, like I took some music that I recorded from 20 years ago, which you've heard, and, um, dropped it into Suno, for example, and it just did amazing things with it. Like I had never been able to hear my music, um, with like actual real, you know, drums and an oud and like all different types of instruments that I've always wanted to layer in there.

[00:08:52] Uri Schneider: Not to mention extracting the sheet music with an app like Songscription.

[00:08:56] Michael Jabbour: Exactly right. That's right. You can now extract sheet music. You can bring that sheet music to life. Um, you could iterate with an AI on it. And so. That idea of enabling every person to be successful in these really incredible ways is absolutely part one of that.

Part two is that jobs will change, right? It doesn't mean that jobs necessarily go away, but they're definitely changing. And, uh, you know, for example, uh, you're not gonna have like a string of typewriters necessarily, uh, that are available to you for, for typing in some sort of, uh, um, editing studio.

You're going to have, um, AIs that are even typing the typewriters for you, so to speak. And so, when we look at each of these disciplines, um, whether it's creative or tactical, technical work, you're going to see, um, AIs be able to learn it. Why? Because AIs will excel at training. They're going to excel less at education-related topics.

[00:09:53] Uri Schneider: So talk to us about the education. How do people have to think differently? What skills become more of a premium? Sure. What things are being offloaded and what things need to be flexed so that we can succeed?

[00:10:06] Michael Jabbour: Well, I mean, I'll give a paraphrase or semi quote of one of the, uh, universities I spoke to, um, spoke at a great university recently.

I was talking to the grad students and the student dean was there. And after my keynote, she was like, that was crazy. And I was like, sorry if I over provoked the students. And she was like, no, no, your presentation was great. She was like, I, I'm talking about the students. She was like, I, it was the first time in a long time that I felt sad.

She tells me, because I watched the level of ambiguity that you were throwing at them and their ability to tolerate it was so low. And I created that. Wow. And it clicked for me. A, a lot of things clicked when I heard her say that because she was able to see a system in flux and, and be able to pivot where most people actually will not be able to see it until it's sort of already passed that moment.

Right. But you're in this. School, you're in this, um, system of learning. It doesn't matter, like necessarily like the age range. Uh, but what are we really spending our time with and teaching, um, these students? Like how are we helping them? Are we helping them to self-learn? So the time that we spend with them, um, is going to be about mentoring and coaching and guiding and extracting their highest potential.

So a lot of open questions around like what education is, what it should be. There's some fascinating examples also of schools that have taken this very seriously. Um, some of the research that, uh, Microsoft, just to give you some lead-in there, that we produced: we did a study in Nigeria with the World Bank, um, on education methods, and we were looking at a controlled trial where we were looking at, um, virtual teacher Copilot use and educational outcomes. And what we found was that six weeks of Copilot use was equivalent to two years of human instruction as far as gained educational outcomes.

[00:12:14] Uri Schneider: Explain that in simple English.

What did that look like?

[00:12:16] Michael Jabbour: So competency, basically just being,

[00:12:20] Uri Schneider: what was the co-piloting? What does co-piloting look like?

[00:12:23] Michael Jabbour: So Copilot, a copilot would be, um, working with an AI assistant of some sort that is helping you and guiding you, right? So if you're...

[00:12:30] Uri Schneider: As learner, as the instructor, both?

[00:12:32] Michael Jabbour: Both, right? But let's just, let's focus on the learner for now.

That was really what the focus of the study was on. Um, so the learner can ask a simple question and just say, Hey, teach me, um, linear algebra. It's a very broad topic. So the AI has to come back and ask you like, okay, like what area of linear algebra do you wanna learn? Um, or something specific like angular momentum in physics.

Uh, looking at the moment of inertia and angular velocity and being able to understand rotational force in that way. And so when you're engaging with this AI, you're really asking it for, for it to assist you with a very specific topic. In some cases, very specific, like, help me push this button on my computer.

In other cases it's teach me a topic. In other cases, it's guide me through this epic of topics.

[00:13:22] Uri Schneider: So take this study that you talked about that you did in Nigeria. Was it Nigeria?

[00:13:26] Michael Jabbour: Yeah.

[00:13:27] Uri Schneider: What, what degree of instruction or training or experimentation was there with the prompting? Meaning how much did the learner have to know how to prompt to get the best outta the AI versus how much was it kind of relied upon the AI to, to drive the show?

[00:13:43] Michael Jabbour: So, it's a great question. I'd have to go back and look at the study to confirm, but I...

[00:13:47] Uri Schneider: Even theoretically.

[00:13:47] Michael Jabbour: ...but I believe that it wasn't based on the student prompting at all. Yeah. Like I don't think that we were running trainings in order to guide their behaviors. Right. Now my kids, I do, I do teach them at least some basics that they can remember all the time.

You know, sometimes like you spend time like exercising and then you're like, I can't remember what I'm supposed to do right now. So you need like that one or two exercises that you like carry with you. So, um, for my older kids, I tell them that they should tell the AIs to put the cognitive and emotional load on me before their prompts.

And funny story, I will not mention which child. Um, but uh, I can tell which children are using that prompt and which ones are not and when, but my...

[00:14:29] Uri Schneider: What does it look like? What's the difference?

[00:14:30] Michael Jabbour: So you can, you can...

[00:14:31] Uri Schneider: What does the prompt look like?

[00:14:32] Michael Jabbour: So you can see...

[00:14:33] Uri Schneider: And then what does the output look like?

[00:14:33] Michael Jabbour: Well, I look at their prompts, so I got their outputs.

[00:14:35] Uri Schneider: Yeah.

[00:14:35] Michael Jabbour: Uh, so you can see in the outputs that their ability to think critically about a topic. 'cause I would be able to challenge them. Like, is this what you really agree with? Mm-hmm. Like you wrote something. Is this what you agree with? 'cause this doesn't sound like the person that I know right now. The writing was on par because they could, the students can now get the writing to be in their language.

[00:14:54] Uri Schneider: Mm-hmm.

[00:14:55] Michael Jabbour: They can get it to be at their level, their persona,

[00:14:57] Uri Schneider: their style.

[00:14:58] Michael Jabbour: Right. So to be able to detect like this child's writing, um, over an, over the, the child's regular writing, their AI writing versus regular, very difficult. And that, that's only gonna,

[00:15:08] Uri Schneider: that's in terms of form.

[00:15:10] Michael Jabbour: Style, form, form, form, style,

[00:15:12] Uri Schneider: but not substance.

What you're saying is in terms of substance, is this what you agree with? Are these the ideas?

[00:15:16] Michael Jabbour: I know my children's minds and what's in their hearts to some degree. Right? So like I can, I can sense when something is off. Now a teacher, maybe not so much anymore. It's gonna be more and more difficult for them to teach like in that way.

My 5-year-old though, I have to give her a little bit different type of instruction 'cause she was born about a week before, uh, pandemic shutdowns here in New York City. That was totally fun. Um,

[00:15:43] Uri Schneider: that was sarcastic. Here's the laugh.

[00:15:44] Michael Jabbour: Fun. Right? Um, and, uh, you know, she grew up talking to Alexa, Siri, remote controls.

You know, she, she demands that those machines respond to her in a meaningful way. And, you know, if Moana doesn't show up when she calls it, like all hell will break out in the house, 'cause her expectations are so high. Um, but when I see her engaging with the AI, I tell her to tell the AI to teach me and not tell me.

[00:16:13] Uri Schneider: Hmm.

[00:16:15] Michael Jabbour: And so even if it says something to her, I have to help make sure that she is continuously going through that process of saying, you know, not just what is the answer to this question, but teach me. Right? Give me, guide me, grow me.

[00:16:28] Uri Schneider: So many people, I find... We'll talk about this, this great article that you wrote, uh, on your Substack, which is amazing.

All the articles are amazing. Thank you. Very thought provoking and very, pretty helpful for framing, like how to approach and think about this whole time and this whole technology that's evolving, the different ways people engage. Like, so even like Google, right? Google search. I grew into the time where Google Search became a thing and I watched how different people were able to find more good content that they were looking for, and other people would find content that wasn't what they were looking for.

And a lot of it had to do with understanding, like how do you type in a search query? So when you talk about your five-year-old saying like, instead of tell me, teach me. So like what is, what is the prompt, what's the difference in the prompt? If you could like create that contrast in the way that you see young adults, whether they're students, professionals, how are they using, let's say even open source, you know, ChatGPT, and Claude and Microsoft.

[00:17:31] Michael Jabbour: So let's talk about the search engine for my older kids for a moment, because I think that their transformation, their change was very noticeable. With my daughter, it was basically second nature. And I have so many funny stories about her interactions, but, um, my older kids, they've totally transitioned out of the search for information to the search for answers.

[00:17:52] Uri Schneider: Mm-hmm.

[00:17:53] Michael Jabbour: So even, um, for a lot of us, we might naturally go into like, um, a Bing or a Google or some search engine, or even ChatGPT, and we would search in that limited way for information.

[00:18:08] Uri Schneider: Right.

[00:18:08] Michael Jabbour: Answers.

[00:18:09] Uri Schneider: What's a good kids movie for the weekend?

[00:18:10] Michael Jabbour: Basic information, right? Can you gimme information that you have on this type of skin condition?

[00:18:16] Uri Schneider: Right?

[00:18:16] Michael Jabbour: Sure. I can get you information on that. Right? So these searches, search for information. Search for information versus the search for answers. So when you're asking a question like, how do I solve this problem?

[00:18:30] Uri Schneider: Mm-hmm.

[00:18:31] Michael Jabbour: That's a very different type of search,

[00:18:33] Uri Schneider: or I need to be in this city for this presentation at this time, and I have this other constraint.

What's the best, you know, what would be some solutions or ways that I could go that would save time and effort?

[00:18:43] Michael Jabbour: And that is a search for an answer. Right. So you want someone to go in and do that work for you. So normally you would ask like your, your friend, your spouse, your assistant, your child to, to help you, um, to match the constraints because it's constraints.

Like who wants to do, you know, constraint satisfaction? Like that is like the worst type of exercise. And I know a lot of people who are really good at that, but, but it's a really difficult exercise. But if you ask an AI agent to do that for you, you're already in a totally different, um, world. So, uh, you mentioned Substack.

There are three different types of modes that I commonly find people falling into. Um, one is assistant mode

[00:19:25] Uri Schneider: assistant.

[00:19:26] Michael Jabbour: So assistant mode is that search for answers. I need someone to do a task or tasks for me on my behalf in order to make my life easier. So, hey, you assistant, go do those things for me.

[00:19:40] Uri Schneider: What are three good coffee places for MJ and me to get a coffee after this?

[00:19:44] Michael Jabbour: Right, like as an example or, you know, re research these three articles on the Microsoft study and tell me, summarize this, what their focus was.

[00:19:54] Uri Schneider: Summarize this article for me.

[00:19:55] Michael Jabbour: Exactly. Summarize article, like those are, those are tasks that your assistant can do for you.

It's kind of blind and a little bit dumb sometimes, depending on how you go about your work with an AI. Uh, but that's an assistant. Uh, a basic task doer on your behalf. Then there's partner mode.

[00:20:16] Uri Schneider: Partner mode.

[00:20:17] Michael Jabbour: Partner mode, right? So you can think about it just like a partner in business, partner in life, partner in marriage, right?

You work together to achieve something.

[00:20:26] Uri Schneider: I remember the first time my brother told me, yeah, you know, Chat and I, we worked on this plan together.

[00:20:32] Michael Jabbour: Yeah.

[00:20:33] Uri Schneider: And it was, I can tell you exactly what it was. I think it was Rosh Hashanah and we were sitting there talking and I thought it was the craziest thing in the world.

And then I found myself on a flight on the way to a conference, and I need to finish the slides. And not only did I need to finish the slides, but I said, you know. I wanna stop working to perfect everything. I just wanna get this done in 20 minutes. Help me frame the following concepts into 30 slides, gimme some suggestions.

And we were literally working together and asking it to prompt me with questions along the way to check and see. And what I ended up delivering was the best presentation I ever gave, and it was the most efficient preparation because I got outta my own way and I had a partner. And then I turned back to my brother and I'm like.

Thank you. I laughed in your face when you were talking about it with like this anthropomorphic kind of attribution. But it totally, totally, totally was like a great partner for me.

[00:21:28] Michael Jabbour: Yes.

[00:21:28] Uri Schneider: Would that be an application of what you're talking about?

[00:21:29] Michael Jabbour: Perfect application. Excellent, you know, way to think about it.

And, um, but I want to extend also your idea around partner. Help me think about an idea that doesn't exist. Yes. Help me. Um, help me think about something that's very difficult for me.

[00:21:46] Uri Schneider: Yeah.

[00:21:47] Michael Jabbour: Right. So now you're, you're almost in like this therapeutic relationship.

[00:21:50] Uri Schneider: Yes, yes, yes. I've done that as well. I would say like.

I know I have a tendency to get really theoretical. Yes. Challenge all the places where I'm getting really abstract and challenge me to come up with practical applications for the audience. Make sure I don't drift.

[00:22:05] Michael Jabbour: Hey, it's, it's a great, that's another great example,

[00:22:07] Uri Schneider: right? Or here, take this piece of writing and show me all the places that I need to challenge where my ego is getting into this.

[00:22:12] Michael Jabbour: Yeah. Hey, I'll give you a specific prompt that you can use,

[00:22:15] Uri Schneider: please.

[00:22:15] Michael Jabbour: So, um, some of my articles, uh, I wander. And I, I have thoughts that are seemingly disconnected or extremely disconnected that I know belong together, but they're hard for me to pull it together. And so, um, that's where I spend a lot of time.

But I will tell, after I've written down some of my thoughts, I will ask a separate ai, not the AI that maybe assisted me with research or something and say, take a look at this article,

[00:22:41] Uri Schneider: Just to break that down for someone who doesn't follow what you just said. A separate AI. So just give a practical example.

[00:22:46] Michael Jabbour: Yeah. So let's say I use Copilot, Microsoft Copilot, to draft an article, and then I use ChatGPT to check an article, and then I might use Claude as a third opinion.

[00:22:56] Uri Schneider: Mm-hmm.

[00:22:57] Michael Jabbour: Right? So a second or third opinion, um, AI, you could put it, so it doesn't have any context. Uh, now sometimes you can easily do that just by opening up a new session.

Right. But a lot of people have memories turned on now, so it gets a little bit poisoned. The context gets poisoned. But if you use a third-party AI...

[00:23:15] Uri Schneider: So a confirmation bias starts to be poisoned.

[00:23:17] Michael Jabbour: Something like that. Yes. So let's say you go to another AI and you say, here's my article.

Check if it is deductively and inductively sound. And then after that's gone through, ask it what the first principles are of that article and check if I've really delivered on communicating those first principles.

[00:23:37] Uri Schneider: Wow.

[00:23:39] Michael Jabbour: I mean, you'll get feedback that you don't love. But,

[00:23:42] Uri Schneider: but then at least you're aware.

[00:23:43] Michael Jabbour: Yes. At least you're aware. And at least

[00:23:45] Uri Schneider: you, and then you can make a decision. You have some critical thinking to do.

[00:23:47] Michael Jabbour: That's correct.

[00:23:48] Uri Schneider: Do I wanna just deliver what I just did or do I wanna

[00:23:51] Michael Jabbour: Yeah.

[00:23:51] Uri Schneider: Level it up

[00:23:52] Michael Jabbour: and sometimes I will ignore the, the Sure. The suggestions

[00:23:55] Uri Schneider: and I've done that.

[00:23:56] Michael Jabbour: Yeah. But, but I think if it's, um, a pure, logical fallacy that I've communicated something that.

It doesn't make sense or doesn't connect in any way. Like yeah, there's a, it, it's as if you're visually looking at text and there's a huge gaping hole.

[00:24:10] Uri Schneider: Yeah.

[00:24:11] Michael Jabbour: So I can't see that hole sometimes. Yeah. We're

[00:24:12] Uri Schneider: blind.

[00:24:13] Michael Jabbour: But the AIs see them very, very easily. Yeah, that's right.

[00:24:16] Uri Schneider: Love

[00:24:16] Michael Jabbour: it. They partner with you.

Okay. So,

[00:24:18] Uri Schneider: so those applications cover the range of what you're thinking of in partner mode?

[00:24:21] Michael Jabbour: I mean, I, I think so. For the most part. I just, I would want to make sure that you would include some level of, um. Pushing the models in ways that might seem like you could only push a human in that way. Like what?

So partners will, will satisfy that. So an example would be, um, uh, I, I totally don't agree and, um, don't understand what it is that you're communicating with me and I need you to work with me. Like, teach me, explain to me, guide me down the path of your thinking. Show me your sources.

[00:24:55] Uri Schneider: Yes.

[00:24:55] Michael Jabbour: You're not gonna do that to a search engine, right?

[00:24:57] Uri Schneider: Right.

[00:24:58] Michael Jabbour: So you're not even, you're not

[00:24:59] Uri Schneider: even, it's fact, it's, it's it's fact checking. It's checking hallucinations or skipped steps or skipped resources.

[00:25:04] Michael Jabbour: That's right. And it also might be the difference between having one assistant

[00:25:08] Uri Schneider: Yes.

[00:25:08] Michael Jabbour: Versus multiple assistants. Right. So it's the equivalent of having multiple minds.

[00:25:13] Uri Schneider: Yeah.

[00:25:13] Michael Jabbour: Multiple assistants in that loop. Right. Like I don't want one type of editor to look at my sound. I want four different types of editors. 'cause now we can

[00:25:22] Uri Schneider: afford that. I find it fascinating. We'll come back to it, but like. It's all based on mathematical frameworks and logic, and yet you would think one plus one equals two.

But when you give instructions and you say, use these three sources to provide such and such information, and then you'll read it and you'll, and I challenge and I say, well, it doesn't seem like you really used source number two at all.

[00:25:47] Michael Jabbour: Yeah,

[00:25:47] Uri Schneider: you're right. You're right. I didn't.

[00:25:51] Michael Jabbour: Yeah. So we might have to come back.

That's a big topic. That's, you know, hallucinations, but

[00:25:55] Uri Schneider: the cha but the challenging, right? There's hallucination and then there's omission. I mean

[00:25:58] Michael Jabbour: that That's right. Yeah. Well, yes. And I, I think that there's a, that's a particular type of filtering bias that occurs.

[00:26:03] Uri Schneider: Yeah.

[00:26:04] Michael Jabbour: Right. So,

[00:26:05] Uri Schneider: okay, so we got, we got assistant mode.

[00:26:07] Michael Jabbour: Yes.

[00:26:07] Uri Schneider: Partner mode.

[00:26:08] Michael Jabbour: Yep.

[00:26:08] Uri Schneider: And then

[00:26:09] Michael Jabbour: explorer mode.

[00:26:10] Uri Schneider: Oh, that sounds good. Let's explore that.

[00:26:12] Michael Jabbour: So explorer mode, uh, is great. I feel like I live probably like. 80% of my, of my current AI existence inside of Explorer mode.

[00:26:23] Uri Schneider: How much of your life do you live in your AI existence?

[00:26:25] Michael Jabbour: I, I mean, I want, I, I, I be

[00:26:27] Uri Schneider: honest,

[00:26:28] Michael Jabbour: would love to say it's very little, but it's very integrated into the way I think and solving problems.

[00:26:33] Uri Schneider: That's a very Jewish answer.

[00:26:34] Michael Jabbour: Yeah.

[00:26:35] Uri Schneider: What percentage of your waking hours or sleeping hours are AI, um, AI experiences?

[00:26:41] Michael Jabbour: Let, let's assume that I consult with like 60 to 70% of things within AI if I can.

[00:26:48] Uri Schneider: Mm-hmm.

[00:26:49] Michael Jabbour: Why that, that's, this is the key. The key is why would I do that?

[00:26:52] Uri Schneider: Mm-hmm.

[00:26:52] Michael Jabbour: Not because it's helpful, because it might be irresponsible for me not to.

[00:26:56] Speaker 3: Hmm.

[00:26:58] Michael Jabbour: Right. So I think people have yet to hit that place in their AI use, in the larger public domain, where they are using it in a way that is reliable enough that they're like, there's consequences for me not using this.

[00:27:16] Uri Schneider: Let's come back to that 'cause I distracted us from explorer mode. So this 70% of whatever you run by AI, because it feels irresponsible not to

[00:27:25] Michael Jabbour: correct,

[00:27:25] Uri Schneider: but what's this explorer mode? What does that look like?

[00:27:27] Michael Jabbour: So the explorer, the explorer mode is the unknown. A lot of the topics that I'm exploring in my Substack are the unknown. Like, what is the meaning for us, um, from a human perspective? Like, is it right, you know, is it correct for us to transition a particular type of work at a particular speed?

Um, even my most recent article, just this past week, about something in, um, mysticism, where they talk about the short, long path and the long, short path. Right. So one of the things that stimulated me to start writing about that, one of several, was my wife. 'Cause she's just, um, she's an incredible person and she inspires me. And, um.

That's also a longer story. But I, uh, when I was thinking about this, um, the difficulty, uh, associated with growth because through friction comes growth. And without friction you have atrophy.

[00:28:31] Uri Schneider: Yeah.

[00:28:32] Michael Jabbour: And so I was wondering to myself, okay. Um, just for background, for those who don't understand, who don't know that, uh, that, uh, teaching, right.

It's, um. You have a short path. Let's say a person has two paths to choose from. One of them is just filled with poisonous thorn bushes and, um, it's very difficult to get through. It smells terrible, but it's short. It's like 10 feet long, right? Versus a long path, um, which might be a little bit windy, but smells great.

And you have more than enough space to walk along it. Um, and in the end, by the time you go down this long path, you get there before,

[00:29:11] Uri Schneider: yeah.

[00:29:12] Michael Jabbour: The, the short path, right? So you con

[00:29:15] Uri Schneider: and, and, and in better shape

[00:29:16] Michael Jabbour: and in better shape you're alive, right? So, um, there's a competing idea with this also from, from like, uh, some mystical backgrounds around friction.

Right. So, but to bring it down to earth, if I had to break all my patient base down, I could break them easily down into two categories. Those that can tolerate increasing doses of friction and those that cannot.

[00:29:38] Uri Schneider: Mm-hmm.

[00:29:38] Michael Jabbour: And typically those that can, will be successful in some form of therapy.

[00:29:43] Uri Schneider: Yeah.

[00:29:43] Michael Jabbour: Right.

So I was thinking to myself, okay, so friction is good, but the path, the short path seemed bad. I was like, is like, what's the role? And I'm, and I'm always struggling with our, our cognitive ideas around friction. And so I was, I wanted to explore that topic. Here's a very clear paradox. Um, a very clear conflict of ideas and conflict of, um, even the emotions associated with those ideas like.

Is it possible that AI could help me understand where I sit in those areas, what the research says about those areas, and, and to write about it, like to actually explore a somewhat unexplored or maybe fully unexplored topic. So that's more in the philosophical domain, but what, so

[00:30:29] Uri Schneider: what did that look like?

Give us like a little window into your AI experience there.

[00:30:33] Michael Jabbour: So, for me, it looked like

me.

[00:30:37] Uri Schneider: I sense that you could tolerate friction. That's my read, but I'm not Copilot

[00:30:41] Michael Jabbour: for better or worse. Like, yes, I think life set me up for being able to tolerate some level of friction.

[00:30:46] Uri Schneider: Mm-hmm.

[00:30:47] Michael Jabbour: And, um, I, uh, I've had the interesting and also challenging experiences that have, uh, enabled that for better or worse.

Um, but I would say for better, right? I, I think ultimately I became, um, a different person, a better person, um, more alive. Uh, more connected to what life actually is,

[00:31:09] Uri Schneider: comes back to what you shared at the beginning. You love humans.

[00:31:11] Michael Jabbour: I love humans, but also loving humans means that you love the mess. Like if you're looking for a cold existence where a child just doesn't break anything, doesn't, doesn't mess anything up, then, then

[00:31:23] Uri Schneider: kinda locked into assistant mode,

[00:31:24] Michael Jabbour: you're kinda locked.

Yeah. Thank you. That's exactly what it is.

[00:31:29] Uri Schneider: You gotta be ready for the abyss.

[00:31:31] Michael Jabbour: You gotta be ready. Right. Um, for the unexplored,

[00:31:33] Uri Schneider: unexpected,

[00:31:34] Michael Jabbour: most unexpected areas expect

[00:31:35] Uri Schneider: the unexpected. Totally.

[00:31:36] Michael Jabbour: Exactly.

[00:31:37] Uri Schneider: If you could do hard thing, hard things become easy.

[00:31:39] Michael Jabbour: E Exactly right.

[00:31:40] Uri Schneider: Easier.

[00:31:40] Michael Jabbour: Easier. And so, so

[00:31:42] Uri Schneider: yeah. So, so,

[00:31:42] Michael Jabbour: so this topic was, was difficult.

This, this, this, the topic was difficult for me and I was just trying to struggle with it. And, um, you know, I, because I appreciate both, so I appreciate what looks like, um, the short, long path and I appreciate what looks like the long, short path. And I was thinking about like things that are abstracting it to other areas, right?

Like education.

[00:32:04] Speaker 3: Mm.

[00:32:04] Michael Jabbour: How long do you stay in education? Is that the long, short path? So I had a lot of open questions about my own ability to wander and my own relationship with friction. And what is good friction? What is bad friction? Is it definable? Is it researchable? You know what? What has been researched already?

Like what do we know? What's the state of science, state of knowledge?

[00:32:25] Uri Schneider: So this was the back and forth you're having with your

[00:32:27] Michael Jabbour: life. Yes, because, because there wasn't an article that I could read and that would answer this question,

[00:32:33] Uri Schneider: so it was like the conversation we're having now.

[00:32:35] Michael Jabbour: Yeah.

[00:32:36] Uri Schneider: Kind of like a spontaneous, unstructured, developing inspiration.

[00:32:39] Michael Jabbour: Well, we totally did not plan for the conversation, so it is

[00:32:42] Uri Schneider: by definition, but... No, but I'm saying it's different than, as you said, assistant mode, which might be like search, looking for information or for answers. Yeah. Partner would be: be a thought partner with me. Help me see what I can't see. Yeah. But this would be like a spontaneous conversation of exploring and just riffing on where things go.

[00:33:00] Michael Jabbour: That's right. Yeah. And it's at a certain point that'll also happen with movies and music and other areas.

[00:33:05] Uri Schneider: Explain that.

[00:33:06] Michael Jabbour: So we talked about

[00:33:08] Uri Schneider: this. You were talking about how your kids may not know the difference between human generated music and AI generated music.

[00:33:14] Michael Jabbour: Yeah, they can't tell. But, but also what I figured out is that I, I showed some adults, the AI generated versions of my music and they also couldn't tell.

[00:33:24] Uri Schneider: Yeah.

[00:33:25] Michael Jabbour: Which I thought was totally fascinating. I was like, so what's the value of me playing?

[00:33:30] Uri Schneider: You could ask what's the value? And you could ask like, what's the loss?

[00:33:35] Michael Jabbour: All, all those things.

[00:33:36] Uri Schneider: Yeah.

[00:33:36] Michael Jabbour: Right. So yesterday I was with my daughter and, um. We were just playing and she was like, can you put music on? I was like, sure.

And 'cause sometimes we'll dance and, you know, break out 5-year-old dance parties are always fun. And uh, she was like, but I want your, your music. Can you put mu your music on? And my, my kids don't usually ask for my music from like 20 years. They don't ask stuff. I know. They don't, they're like, it's like, can I read that really?

Okay. Sure. Yeah. Can I read that article you wrote, Papa? No. Um, um. But she asked for my music and I was like, okay. But then she, and then she followed up on her own. She, she's just about six now, um, in a few weeks.

[00:34:13] Uri Schneider: She's the COVID baby.

[00:34:14] Michael Jabbour: She's the COVID baby. And she was like, no, I don't want the computer music. I want your music.

Wow.

[00:34:18] Uri Schneider: Wow.

[00:34:20] Michael Jabbour: This was just last night.

[00:34:22] Uri Schneider: So is it that she couldn't, she could or could not tell the difference or she she was requesting the real thing and asking you to give her the real thing?

[00:34:30] Michael Jabbour: I think that she couldn't tell the difference. At a co a basic level. Yeah. Cognitive, emotional level. But I think she could feel the impact differently.

[00:34:43] Uri Schneider: So she wouldn't be able to tell you this was generated by AI or this was generated by my father, but,

[00:34:50] Michael Jabbour: but something

[00:34:51] Uri Schneider: about it was seeking, she was seeking the impact.

[00:34:54] Michael Jabbour: Yeah.

[00:34:54] Uri Schneider: Or the transmission of, this is the music my father made.

[00:34:57] Michael Jabbour: Yes. And

[00:34:58] Uri Schneider: could she tell the difference in her experience, if not for asking you to provide it and trusting.

Trusting your provision.

[00:35:04] Michael Jabbour: I didn't prompt her and also didn't ask her what music she wanted. Right. So she just asked for that out of the blue, which was, it was very sweet and I felt very moved by it. But I also, the

[00:35:15] Uri Schneider: lesser father might have delivered the AI music.

[00:35:17] Michael Jabbour: Right.

[00:35:18] Uri Schneider: It's experiment.

[00:35:19] Michael Jabbour: Totally. Yes. There's a lot of experimentation here.

Uh, and I do think that you can get deeply impacted by media that's been crafted in a certain way. You can get deeply impacted by an audio that is generated by music. I believe that. Right. It will, it can bring you to tears. It can move you in an emotional way. It can, and I can tell you some interesting stories there too about, um, about music, but I feel

[00:35:40] Uri Schneider: like that's a big, that's a big inflection point that we passed in the past couple months.

[00:35:44] Michael Jabbour: Yes.

[00:35:44] Uri Schneider: Like creative, the ability to apply AI into creating art.

[00:35:49] Michael Jabbour: Yes. And I,

[00:35:50] Uri Schneider: music, film, visual arts

[00:35:52] Michael Jabbour: and, and I think there's a lot of fear in the community out there about it. And by the way, there's a whole industry out there that's just FUD: fear, uncertainty, and doubt around AI. And anyone who's listening to this, I would advise that you stay far away from that industry.

[00:36:06] Uri Schneider: Where do they park themselves?

[00:36:09] Michael Jabbour: Pretty much everywhere. I mean, Stanford, I think, have evaluated that it had a 30% impact on financing and other types of support areas that people give fear, uncertainty, and doubt based on nothing, even just, just based on no real evidence. Um,

[00:36:24] Uri Schneider: interesting to look at the overlap of the people with FUD around AI.

Compared to the general FUD people.

[00:36:30] Michael Jabbour: Yeah,

[00:36:31] Uri Schneider: yeah.

[00:36:32] Michael Jabbour: Well, the general FUD, the most, like, well-known FUD is like the old, old IBM FUD. I wouldn't say it's today's IBM, but maybe, I don't know. Um, but that, that idea of you'll never get fired for making this big purchase decision.

[00:36:45] Uri Schneider: Mm-hmm.

[00:36:46] Michael Jabbour: Right. We're the best in our class.

And so like, just, just programming someone like that is a very particular type of marketing.

[00:36:57] Uri Schneider: Yes.

[00:36:58] Michael Jabbour: And so like I, I, I think that creatives have a lot to look forward to: being more creative, having someone to explore with. Like, I don't know,

[00:37:09] Uri Schneider: most people listening are like thinking this is very threatening to creatives.

So explain that.

[00:37:13] Michael Jabbour: Yeah. So like, but, but how.

[00:37:14] Uri Schneider: Thinking about the creative is playing a somewhat different role.

[00:37:17] Michael Jabbour: Yeah. So when I'm thinking, when I'm playing guitar, for example, like I'm, I'm, I, I feel my music, like I feel it like. Like vibrating against my chest. I can like connect with whatever it is that's being produced right now.

Do I always have someone that can play the buca or that can, can somehow like just jam with me? No, I don't always have people that can jam with me. And yes, I do want people to jam with me all the time. And if I could be playing music all the time, I might, but that's not something I can afford to do right now.

So is

[00:37:47] Uri Schneider: that partner mode or That's explorer mode.

[00:37:49] Michael Jabbour: I think it's a combination of partner and explorer mode, right? Because at the edges of partner mode, you start to explore.

[00:37:56] Uri Schneider: Yeah.

[00:37:56] Michael Jabbour: With your partner

[00:37:57] Uri Schneider: Co-exploring.

[00:37:58] Michael Jabbour: Right? And so I, I think it's the edge of partner and you're now moving into something where you're exploring, where you can see.

Like I had an idea for some art that I wanted to put on a particular wall, and I'm not necessarily a fantastic artist. I can do stick figures like anyone's business, but like beyond a stick figure, you're already

[00:38:14] Uri Schneider: light years beyond me.

[00:38:15] Michael Jabbour: I beyond the stick figure, I don't know. Um, but I, I had in my mind like a visual, I had in my mind an art piece that was unique that I had never seen before that I wanted to create.

And yes, I did go into ChatGPT to create it. And you know what was interesting? I didn't get it the way that I wanted it. But what I ended up doing was going to Claude, which is a highly visual AI. I described to it what I wanted and what I was dreaming and visualizing. It created the AI prompt.

Yes. For ChatGPT.

[00:38:49] Uri Schneider: I do that all the time.

[00:38:50] Michael Jabbour: And then it created this thing that was in my mind

[00:38:53] Uri Schneider: and it's pretty wild for people that don't realize this. How about, could you do this? Could you, like, there's a style of basketball that's East Coast and there's a style that's West Coast, and you could describe it as, like, faster paced, more fast breaks, fewer half-court sets.

That's West Coast. East Coast is much more slow it down, you know, half-court sets and so on. Just a broad stroke for people out there. Could you, like, describe the qualities or characteristics, for good and for bad, of, uh, ChatGPT, which is one leader, um, which is OpenAI, and then you've got Claude, which is Anthropic, and then you've got Copilot, which is Microsoft, and any others.

But could you give like a little bit of a character. Character profile.

[00:39:37] Michael Jabbour: Sure. So Microsoft Copilot uses both.

[00:39:39] Uri Schneider: Mm-hmm.

[00:39:40] Michael Jabbour: Firstly, um, and I would say that that is designed for the safest type of use, both for kids and for adults and in an enterprise setting. So as far as the safe-for-work, good-for-work, uh, AIs, I think Copilot stacks the highest.

Um, it also understands constructs very, um, more natively, uh, like what a Teams transcript is versus, like, a Word document, and how to navigate those things. Um, like, I find Copilot in Teams to be fantastic. Mm-hmm. I think it's excellent at recording meeting notes in context and can answer great questions.

So that's my go-to for that, um, work, right? When it comes down to, um, ChatGPT versus Claude, both very interesting. ChatGPT has got, uh, you know, over 80% of the market share. So like, the easiest way to think about it is that they're great at 80 to 85% of the tasks. So like, you know, you go in there, you're like, Hey, look this thing up.

Do this thing, fix this assistant

[00:40:41] Uri Schneider: mode,

[00:40:42] Michael Jabbour: fix the grammar. Assistant mode. It is great at assistant mode. Um, there's also pro models that can do very, very deep vertical thinking. Yeah. That's like GPT-5 Pro, for example.

[00:40:51] Uri Schneider: Right? If you took all the ChatGPT users, I think there's a very, very small portion that's using it that way, generally speaking.

Sure. Like you said, it has the most market share. And a lot of people, would it be fair to say most people are using it for, for assistant tasks?

[00:41:04] Michael Jabbour: Yeah. Yeah. Assistant tasks, assistant mode searching even.

[00:41:06] Uri Schneider: Yeah.

[00:41:07] Michael Jabbour: Right. So searching, helping, um, assistant feedback, all that stuff. Um, all the partner. Um, kind of mechanisms that does really, really well.

Um, I feel like Claude is a,

[00:41:21] Uri Schneider: Claude is my people. Just for the record. So I do have a bias. I'm super interested in hearing how you typify, characterize Claude.

[00:41:29] Michael Jabbour: Yeah. Um, I Relationships, my people. Your people. It's all, you know,

[00:41:33] Uri Schneider: I, no, look, even in ai, we're gonna have like tribes.

[00:41:35] Michael Jabbour: Yeah. We totally, I totally get, get it.

I totally get it. Try

[00:41:37] Uri Schneider: Gray ai.

[00:41:38] Michael Jabbour: I totally understand that. And, and, and agree like we're gonna have, um, a lot of that. But it's an

[00:41:43] Uri Schneider: open tent. Like you could eat

[00:41:44] Michael Jabbour: Yes. You could eat

[00:41:45] Uri Schneider: kosher and halal under one tent. We can do Copilot and Claude and ChatGPT all under one tent.

[00:41:50] Michael Jabbour: Yeah, that's my tent. My tent's pretty large.

I include other models too. Um, but I do think that Claude is a, um, a deeper thinker, not in the sense of, like, just vertical depth. Mm-hmm. But I feel like the way that it approaches a problem is, uh, very holistic or robust. And it, like, will catch things that I will catch, or it will, um, emphasize something I will emphasize, versus, like, just what is the most appropriate thing for this setting, which to me feels like a great average.

[00:42:28] Speaker 3: Mm-hmm.

[00:42:29] Michael Jabbour: Right. Um, this will go to the edge a little bit more and say. These, these are, these are ideas that I'm thinking seeing. I also find Claude to be a much more visual model. So

[00:42:42] Uri Schneider: not in producing visuals.

[00:42:43] Michael Jabbour: Producing visuals. Also front ends for For front ends.

[00:42:46] Uri Schneider: Yeah.

[00:42:46] Michael Jabbour: So front ends for applications, I think most are

[00:42:48] Uri Schneider: think though, but making like cartoons and memes.

[00:42:50] Michael Jabbour: No. So it doesn't do that. It doesn't generate images like that visual.

[00:42:53] Uri Schneider: Right. Like code for front end.

[00:42:54] Michael Jabbour: Yeah. But like I, I, for Substack, my Substack, I generally like to include some sort of AI generated image. Well, I

[00:43:00] Uri Schneider: thought you drew those.

[00:43:01] Michael Jabbour: No, I, uh, definitely not. I will, um, I will ask Claude to think through the problem and generate the prompt in order for ai and I actually connected them together.

Yeah. So Claude can now use OpenAI to generate the image that it thought of.

[00:43:15] Speaker 3: Yep.

[00:43:16] Michael Jabbour: So, um, I do that regularly, and so I find that Claude, um, has that level of depth, but it also, um, doesn't do a great job at, like, managing its tokens and, totally, managing large documents. Terrible. Some of the, uh, surrounding ways that it manages artifacts, I feel like, will grow, and we'll get there, but I'm not there yet.

[00:43:39] Uri Schneider: Yeah.

[00:43:39] Michael Jabbour: Um, but even in the development area, like, Claude Code is excellent at development. Yeah. On the, on the coding side,

[00:43:46] Uri Schneider: I think of it as like, when would you use a journal or when would you use an iPad or when would you use a laptop? They each have a time and a place.

It's not one is good Exactly. And one is bad.

[00:43:55] Michael Jabbour: That's exactly right. And we're not even in the era of like flavored AI yet, but. We'll get there.

[00:44:01] Uri Schneider: What would your flavor be?

[00:44:02] Michael Jabbour: Um, so I think my flavor would be, uh, very eclectic. Kind of like me. No

[00:44:07] Uri Schneider: surprise,

[00:44:07] Michael Jabbour: I think. Right? Like I, I, I would want it to like. Really push hard on the edges, the things that were like less average, that were less common.

Uh, and to be able to report back to me on something and say, Hey, yeah, I was just thinking about this odd thing. Like I, I, I wanna hear those, like when I'm with students, I don't, I don't need them to, to give me the, the answer that I'm expecting.

[00:44:32] Uri Schneider: Right.

[00:44:33] Michael Jabbour: Right. Uh, by the way, a great, uh, great teaching tip that, um, one of my friends at Microsoft picked up 'cause she's a teacher.

I, I'm not in that respect, like a traditionally trained teacher.

[00:44:43] Uri Schneider: Well, I'm your student, so that makes you a teacher.

[00:44:45] Michael Jabbour: Okay, fine. So I'm a teacher for this minute. Um, and I, uh. She said to me a long time ago that when I was giving keynotes and lectures at the end I would ask, um, you know, does anyone have any questions for me?

And I would get like, minimal questions. I would get some who would be courageous enough to jump in and be like, I have no idea what I'm doing, but here's what it is. She just made like this one or two word correction to say, what are your cur, what are your questions?

[00:45:14] Uri Schneider: Mm-hmm. Does anyone have any questions?

Nope. I just got an out.

[00:45:18] Michael Jabbour: Right. You

[00:45:19] Uri Schneider: say, what are your questions means? I believe you have a question.

[00:45:21] Michael Jabbour: I know that you have a question. I have faith. I trust that you have a question. What is it? Right? And being able to extract that kind of thinking. And, uh, you know, even a lot of my keynotes are all questions; there are more questions than there are answers.

[00:45:40] Uri Schneider: Yeah.

[00:45:41] Michael Jabbour: Right. So.

[00:45:43] Uri Schneider: The art,

[00:45:43] Michael Jabbour: I

[00:45:43] Uri Schneider: want that the art of the Good question.

[00:45:45] Michael Jabbour: Yes. And I, I, I, I, I, I want an AI that is gonna do more of that with me, for me.

[00:45:51] Uri Schneider: Mm.

[00:45:51] Michael Jabbour: To me, right. To ask me those questions, to be able to, to partner with me in that mode, to assist me in that mode. And so, um, you're gonna have those types of flavored AIs that are.

Um, unique to a person's context, to their, to their life story, uh, to what they're trying to achieve, how they're trying to achieve it. And, um, we're seeing, uh, just the opening act of whatever that might be.

[00:46:18] Uri Schneider: A good analogy I think of is like, you get a car, we're here in New York City, you get a lot of different.

Rain, snow, heat. We don't have a lot of sand dunes, but you know, you've got like different sports mode, power mode, economy mode. So these could be thought of as like different ways. You could take the same machine and flavor it or you know, dial it up or dial it down. Change the settings for different purposes, different terrain.

[00:46:39] Michael Jabbour: Yeah,

[00:46:39] Uri Schneider: that's what you're talking about.

[00:46:40] Michael Jabbour: Yeah, definitely. And, and we do have things that allow, will allow you to have it think more deeply.

[00:46:45] Uri Schneider: Right.

[00:46:45] Michael Jabbour: Um, you know, like hard thinking versus soft thinking, but like, or fast thinking versus slow thinking. Yeah. Better way to put it.

[00:46:51] Uri Schneider: It's very interesting 'cause it's counterintuitive.

Generally we keep buying and upgrading devices with more RAM and quicker responses and more snappy. But one of the things I like about Claude: it thinks. There's a lag between the prompt and the response. And when I go to ChatGPT, it's like, I'm like, don't be so snappy. Don't be so snappy. Even though, like, I'm used to everything else being like a microwave, you know?

Yeah. Let's go 30 seconds cooked.

[00:47:17] Michael Jabbour: Yeah.

[00:47:17] Uri Schneider: But, um, it's fascinating that that's like baked in and it feels really good.

[00:47:22] Michael Jabbour: Yeah. And you can get ChatGPT to do that, totally, with another model. Um, you could do it and

[00:47:26] Uri Schneider: just like that, it's organic and natural in Claude

[00:47:29] Michael Jabbour: y

[00:47:29] Uri Schneider: in a, in a, I'm saying that in a provocative way.

Totally.

[00:47:32] Michael Jabbour: Totally agree with you. Yeah. I, I

[00:47:33] Uri Schneider: like that's the default and it sets the tone and the experience.

[00:47:36] Michael Jabbour: Yes. And I think you have to look at also who it's serving.

[00:47:39] Uri Schneider: That's right.

[00:47:40] Michael Jabbour: Right. Like, what are most of the tasks that are done, and what do people mostly want in ChatGPT versus what do they mostly want from Claude?

Right. So like for me, I don't view them as competitive at all. Like I, I'm using them totally in partnership. I have them partner with each other

[00:47:58] Uri Schneider: totally

[00:47:58] Michael Jabbour: continuously.

[00:47:59] Uri Schneider: It's like consulting with people on both sides of the aisle.

[00:48:01] Michael Jabbour: Yeah, that's right.

[00:48:02] Uri Schneider: What would you say as we come to the end, what would you say is like the most exciting, promising application that you either are currently using it for or look forward to seeing how it can solve problems in the world, and then contrasting that with some of the greatest concerns or risks.

Of what can go wrong.

[00:48:20] Michael Jabbour: Sure. So, tiny topic to end on.

[00:48:22] Uri Schneider: Small topic. Yeah. Just like one anecdote was top of mind. We won't go too deep.

[00:48:26] Michael Jabbour: Right. So, um, three areas.

[00:48:30] Uri Schneider: One thing I would say to preface, we might've even sat in the same lecture where it was a discussion about human beings.

And if we look at, let's say, the Holocaust, so Hitler demonstrates the full extreme of evil and destruction and the worst of what human beings can do to other human beings. And this person, I think it was Asher Wade, uh, was talking about the fact that he also teaches us and inspires us, that if he could take it this far to the side of evil, then we could take it this far to the side of good.

And I think about that with the internet, and I think about that with technology, and I once heard someone else say, it's like kitchen knives. Everybody has kitchen knives in the kitchen, but you better watch how the kids are using 'em or something. Dangerous could happen, but no one would cook without kitchen knives.

So anything that has a power for the good also has the risk of doing harm. So without being a FUDder, seeing the full potential, but recognizing we have to be intentional.

[00:49:29] Michael Jabbour: I'll never call you a FUDder. Don't worry.

[00:49:30] Uri Schneider: Thank you. I'm worried about people that are listening that are FUDders.

Some of the people that listen to this are FUDders.

[00:49:34] Michael Jabbour: I, I hear you.

[00:49:34] Uri Schneider: Don't be a FUDder.

[00:49:35] Michael Jabbour: Don't be a FUDder. I agree. Don't be a FUDder.

[00:49:36] Uri Schneider: What's the opposite of a FUDder?

[00:49:37] Michael Jabbour: I'm not sure, but an optimist.

[00:49:40] Uri Schneider: Fear, uncertainty.

[00:49:43] Michael Jabbour: Doubt

[00:49:44] Uri Schneider: and doubt. So we'll think of the opposite. You know, comment on this. Let us know what you think.

[00:49:48] Michael Jabbour: Yeah, I, um, okay. Three areas.

[00:49:52] Uri Schneider: Yeah.

[00:49:52] Michael Jabbour: In society, the areas, at least, I pay a lot of attention to: uh, work, medicine, education.

[00:49:59] Uri Schneider: Yeah.

[00:50:00] Michael Jabbour: So as, uh, our CEO Satya said several times, this is the first time in history where it's very possible that every person could have a Stanford doctor, an educator in their pocket.

[00:50:13] Speaker 3: Mm-hmm.

[00:50:14] Michael Jabbour: We're at that point in history. Right. Smartphones are ubiquitous pretty much everywhere in the world. And, um, access to the internet is becoming more ubiquitous everywhere. You're gonna be able to have access, um, access to knowledge and information, um, in a way that has never been seen before and is, and will make the internet look very small.

[00:50:38] Uri Schneider: Say that last part again.

[00:50:39] Michael Jabbour: So when it comes down to the access for information, right, the access to, um, to getting educated, to growing in that, in all those ways. You're going to see the, the information that was distributed in the internet will be small in comparison. It, it will be microscopic in comparison to what you're seeing right now.

Right now, not three years from now. Right. Just the, the more that you access it, I think right at this moment when we're accessing all these AIs, remember that the aperture is this big. And so it's like trying to extract the world's knowledge through the mouth of a five-year-old and the mind of a five-year-old.

That's the current status. So in a world where everyone can have a top doctor and a top educator, that just changes everything about what we're trying to do in terms of having, um, a better society. A, um, a positive outcome where people aren't trying to take themselves, um, take themselves out. Instead, they're building, building each other.

You know, there's a great, um, this, uh, it's not a, it's not a story, it's like real life. But, uh, crabs, when crabs are in a bucket, they never have to cover a crab bucket because they're all holding themselves down. They never actually escape the bucket. So I view like our society to some degree, many parts of our society, like a bucket of crabs.

It doesn't require covering. And so here we're in a place where we could actually build each other in ways that we can't even yet start to predict. And that's going to be on, I would say the medicine and education side, which by the way, are very related to each other, like health outcomes, your educated society, et cetera.

Um, when we're looking at work, I do think the work is going to change. I think it is going to, um, we're gonna be able to lean into work in a very meaningful way. Um, I do think that certain jobs will go away and many jobs will come also in terms of the way that we can now, um, leverage human potential.

And you're going to see compounding and precision at scale happen in a way that even Ford and man and that type of manufacturing could never have ever envisioned. Right. So you're, you're going to see change, um, in those three dimensions, um, and potentially for the better.

[00:53:13] Uri Schneider: You know what, I'm gonna modify my other side of the question.

Instead of the, it's not talking about dystopian fear, but more like what are the ways that today people are engaging with AI, which is just minimalistic and, and limited and kind of capping the value they can get out of it.

[00:53:30] Michael Jabbour: So. I like that you reframed that and thank you. 'cause I, I, I think the dystopian ideas are, are only helpful in very specific scenarios, right.

Uh, I, I do think that, um, where people can lean in, well, first of all, the, the worst case here is that people don't get access. So that's the most dystopian future

[00:53:53] Uri Schneider: inaccessibility.

[00:53:54] Michael Jabbour: Inaccessibility,

[00:53:55] Uri Schneider: right? Or uneven accessibility.

[00:53:56] Michael Jabbour: Yeah. Yeah. Just, just not having access to the models. Right. Um, unevenness will eventually even itself out, I think, but, um, but no access at all is a problem.

And, uh, as far as where people need to lean in and they're probably not leaning in is just how far can I push this to help me? Right. So ask the AIs to ask you 10 questions amidst the prompt or in this conversation, in this dialogue.

[00:54:23] Uri Schneider: I think the example you gave that I've come to is also asking Claude to help create a prompt to prompt ChatGPT.

But you could do it within a model, like you're saying, like, help me flesh this out. What 10 questions should I be asking?

[00:54:35] Michael Jabbour: That's right.

[00:54:36] Uri Schneider: Yeah.

[00:54:36] Michael Jabbour: Yeah. And I, I, I think that. Another area that people can, can lean into is, um, looking deeper into their own insecurities around things. So the, there's a great Leland quote that, um, I talk about in some of my, my lectures around, uh, that a person can basically only grow to the proportion that they can, um, accept truth about themselves without running away.

And one of the things that I've seen with teaching so many people how to use these AI in person is that these tools are great communicators, generally speaking. And, um, humans are mediocre communicators, generally speaking, some are terrible. And so

[00:55:25] Uri Schneider: speaking and listening

[00:55:26] Michael Jabbour: both

[00:55:27] Uri Schneider: sides,

[00:55:27] Michael Jabbour: both, both, both those things,

[00:55:28] Uri Schneider: we don't like to hear everything that we have to hear.

[00:55:30] Michael Jabbour: Correct. Um, myself included, and, uh, I, I think that if, if we can lean into those areas of vulnerability, that human messiness, to like, understand, like, why, why am I thinking about something? Yeah, I'm probably being stubborn. I'm probably like, like leaning in in a way that might be too heavy or inappropriate or, um, I'm not leaning in enough.

So just being able to come face to face with who you are and how you operate and what that means to, um, those around you is gonna be an incredible unlock for a lot of people.

[00:56:05] Uri Schneider: Imagine you had a billboard. You could take a billboard in that, uh, in Times Square. What would be your slogan? What would be your message?

[00:56:15] Michael Jabbour: Do good and be good.

[00:56:17] Uri Schneider: Do good and be good. This has been awesome. This was really good. Thanks for coming.

[00:56:21] Michael Jabbour: Thank you. Thank you for having me. It's so good to see you.

[00:56:24] Uri Schneider: I hope you enjoyed this episode. If you did, share it with a friend, and if you wanna get more tips and follow us for more insights, check out transcendingx.com/email. And remember, keep talking.